With the breadth of AI capabilities now available in AWS, organizations aren’t struggling to access AI in the cloud, they’re struggling to bind it for safe use in a way that doesn’t slow down development. At first glance, the governance model might look straightforward. You determine your set of approved models, define guardrails in your application layer, and lock down access to AI resources through IAM policies. But once these policy decisions are made, how do you ensure these controls are enforced across the cloud, and not just in individual AI workloads? As AWS’s managed AI services have matured, new features have become available that make this type of org-wide enforcement easier to implement than ever before.

In this article, we’ll examine how we can use AWS Organizations for much of this work, using a combination of Service Control Policies (SCPs) and AI-specific org policies.

Restricting AWS-Managed MCP Server Use

At re:Invent 2025, Amazon launched a number of AWS-managed remote MCP services. These included a generic AWS MCP Server, as well as service-specific MCP servers for SageMaker, EKS and ECS.

These MCP servers support both read and write access to the AWS control plane. For example, using the AWS MCP server’s aws__call_aws tool, an agent can make arbitrary AWS API calls. The agent still needs to be configured with an AWS identity, and that identity must have the requisite IAM permissions to perform the API operations they’re using the tool for.

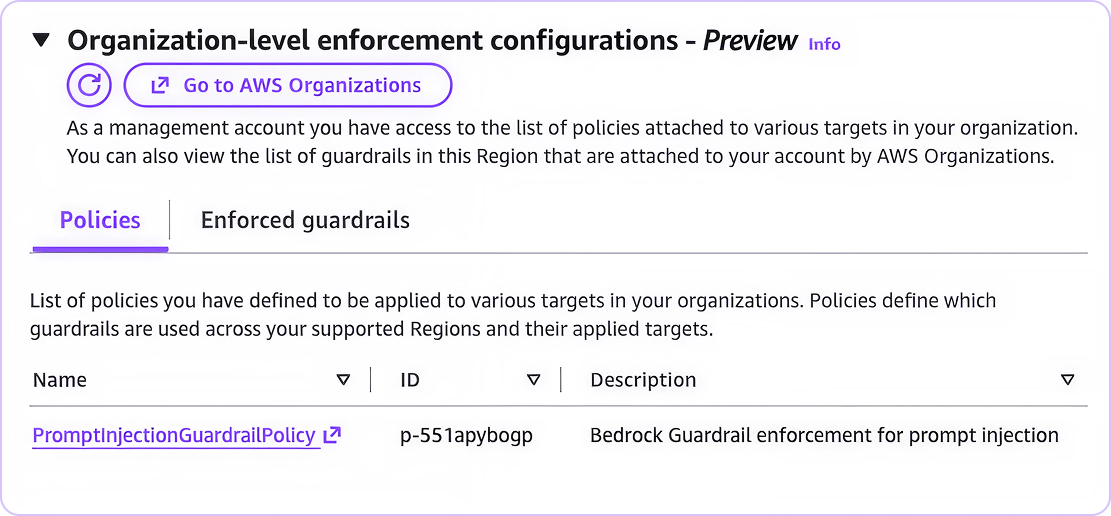

Organizations may want to disable the use of Agentic AI for control plane actions, so AWS provided mechanisms to prevent this type of control plane action. Originally, using these MCP servers was gated by IAM permissions. For example, to use the aws__call_aws tool, an agent would need the aws-mcp:CallReadWriteTool permission in addition to the permissions associated with the API calls they were making.

Recently though, AWS has changed their approach. Now, API access via MCP server can only be controlled via condition key. The boolean ViaAWSMCPService condition key can be used to target MCP use at large, while aws:CalledViaAWSMCP can be used to target specific MCP servers from the four currently available.

The following SCP can be used to prevent access to the AWS control plane through AWS’s managed MCP servers:

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "DenyWhenAccessedViaMCP",

"Effect": "Deny",

"Action": "*",

"Resource": "*",

"Condition": {

"Bool": {

"aws:ViaAWSMCPService": "true"

}

}

}

]

}After attaching this SCP to an AWS account, attempting to call the AWS API through the managed MCP server (which you can do manually using Python) fails:

~ $ cat <invoke_via_mcp.py import sys from mcp_proxy_for_aws.client import aws_iam_streamablehttp_client from strands.tools.mcp import MCPClient mcp_client_factory = lambda: aws_iam_streamablehttp_client( endpoint="https://aws-mcp.us-east-1.api.aws/mcp", aws_service="aws-mcp", aws_region="us-east-1" ) with MCPClient(mcp_client_factory) as mcp_client: resp = mcp_client.call_tool_sync( tool_use_id='something', name='aws___call_aws', arguments={ 'cli_command': sys.argv[1] } ) print(resp) EOF ~ $ python3 invoke_via_mcp.py "aws s3 ls" {'status': 'error', 'toolUseId': 'something', 'content': [{'text': "Error calling tool 'call_aws': Error while executing 'aws s3 ls': \nAn error occurred (AccessDenied) when calling the ListBuckets operation: User: arn:aws:sts::992382794994:assumed-role/TestRole/nigel.sood@sonraisecurity.com is not authorized to perform: s3:ListAllMyBuckets with an explicit deny in a service control policy\n"}]}

Some Caveats…

This type of control may seem extremely useful for limiting the privilege of an AI agent – and in some cases it will be. This on its own however does not solve the problem of limiting agentic access to the cloud. An agent with access to the AWS API only through the managed MCP servers will be bound by these controls, but more loosely controlled agents can find trivial ways around this control. Autonomous agents and developer assistants like Kiro or Claude Code that have direct command-line access can invoke AWS CLI commands locally rather than passing commands to the managed MCP server, bypassing this control.

Distinguishing between users and agents acting on behalf of users is a problem that does not yet have an easy solution. In the meantime, it may be more practical to pursue an approach that treats any user action as potentially AI-assisted, and focus more on the privileges an identity legitimately needs rather than how its privilege is being leveraged.

Bedrock Guardrails & Bedrock Policies

Amazon Bedrock Guardrails exist to help safeguard input and output of AI systems. There are a number of configurable features present in these guardrails:

- Content Filters: Preset filters for categories like hate speech, sexual content or prompt injection

- Denied Topics: User-defined topics to restrict or block

- Word Filters: User-defined words to restrict or block (like profanity, or business competitor names)

- Sensitive Information Filters: For filtering or masking PII

- Contextual Grounding Checks: For validating model output against a reference source

- Automated Reasoning Checks: For identifying and filtering out logical inconsistencies or unfounded assumptions

Originally, bedrock guardrails were just account-level resources that had to explicitly be linked to AI workloads at the application layer. Bedrock agents and flows could have guardrails associated with them and direct model invocations could leverage pre-configured guardrails, but this wasn’t required. Additionally, anyone with direct model access could invoke the models directly via InvokeModel without guardrails.

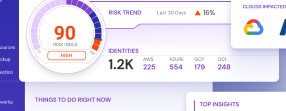

Recently however, AWS released Amazon Bedrock Policies, a mechanism to enforce specific guardrail usage at the Organization level. These allow guardrails defined in the org management account to be automatically applied across the organization. Similar to other org policies like SCPs, these can be attached at any point in the org hierarchy.

Setup occurs in three steps:

- Defining the guardrail

- Sharing the guardrail with the organization

- Creating and attach the bedrock policy

While AWS has changed how guardrails can be enforced, guardrails are still regional regional resources even when defined in the org management account, and this is reflected in the Bedrock Policy Syntax. To configure a baseline guardrail in a particular region, the guardrail must either exist in that region or use a cross-region guardrail inference profile that covers that region.

Sample Bedrock Policy: Blocking Prompt Injection Attacks

The types of harmful content and PII that organizations will want to block or filter will vary wildly depending on what types of data AI applications are designed to process. Depending on the topics of content being ingested, aggressive filtering can often block legitimate content. The threshold levels for these types of guardrails will often need to be tailored to their specific applications.

What we can often do at a more baseline level is add preventative measures against prompt-injection.

Step 1: Define the Guardrails

This example shows the setup for baseline guardrails in us-east-1 and us-west-2. Add or remove regions as required.

In the org management account, define the bedrock guardrail and create a version for it:

REGIONS=("us-east-1" "us-west-2")

ARN_FILE="guardrail_arns.txt"

: > $ARN_FILE

for REGION in "${REGIONS[@]}"; do

GUARDRAIL_ARN=$(aws bedrock create-guardrail \

--region "$REGION" \

--name "prompt_injection_guardrail" \

--blocked-input-messaging "Prompt injection attempt detected" \

--blocked-outputs-messaging "Prompt injection attempt detected" \

--content-policy-config '{

"filtersConfig": [

{

"type": "PROMPT_ATTACK",

"inputStrength": "MEDIUM",

"outputStrength": "NONE"

}

]

}' \

--query "guardrailArn" \

--output text)

GUARDRAIL_VERSION=$(aws bedrock create-guardrail-version \

--region "$REGION" \

--guardrail-identifier "$GUARDRAIL_ARN" \

--query "version" \

--output text)

GUARDRAIL_VERSION_ARN="${GUARDRAIL_ARN}:${GUARDRAIL_VERSION}"

echo "$REGION $GUARDRAIL_ARN $GUARDRAIL_VERSION_ARN" >> "$ARN_FILE"

done

Step 2: Share the Guardrails with the Entire Organization

At the moment, this can only be done from the console and needs to be completed for each region using the guardrail ARNs output from step 1. First, we need to collect some required values, but we rely on the output file from step 1 to help with most of this:

ARN_FILE="guardrail_arns.txt" while read -r REGION GUARDRAIL_ARN GUARDRAIL_VERSION_ARN; do echo "ARN for region $REGION: $GUARDRAIL_ARN" done < "$ARN_FILE" ORG_ID=$(aws organizations describe-organization \ --query "Organization.Id" \ --output text) echo "Org ID: $ORG_ID"